IT Ops tax: Death by a thousand cuts

Date: March 3, 2021

Category: The CTO Perspective

Author: BigPanda

Sub-optimal IT operations cost much more than meets the eye, as poorly performing services have many cost implications other than revenue loss.

Join us in a CTO Perspective discussion with Jason Walker, Field CTO at BigPanda, to learn why an unchecked IT Ops tax can mean death by a thousand cuts.

Read the skinny for a brief summary, then either lean back and watch the interview or, if you prefer to continue reading, take a few minutes to read the transcript. It’s been lightly edited for readability. Enjoy!

The Skinny

There are many hidden costs involved in running sub-optimal IT operations, which most organizations don’t consider. Most enterprises look at their NOC/Command Center as the primary cost, and service downtime as their only KPI, but that is really only the tip of the iceberg. Without a properly operating incident management lifecycle, enterprises tend to support poorly performing services instead of fixing them. By doing that, they are unwittingly:

- Growing costs stemming from dealing with all the incidents that these services are creating on an ongoing basis

- Growing CS teams to support badly functioning features

- Pulling DevOps teams off of innovation and into supporting growing incident management efforts

- And more.

And all this “tax” isn’t being counted or attributed to operations.

The solution? Standardizing monitoring, incident, change management and reporting – through dedicated service onboarding processes and tools. And then, killing sprawl and poorly performing / legacy services – to create a streamlined, central operation. Watch the interview to learn how to do just that.

The Interview

The Transcript

Yoram: Hello and welcome to the CTO Perspective, where we discuss unique perspectives about the most current issues in IT operations. And here today with us is, once again, Jason Walker, former director of IT Operations at Blizzard Entertainment and currently Field CTO at BigPanda. Hi, Jason.

Jason: Hey, great to see you again.

Yoram: Great seeing you again, I’m always looking forward to our conversations. Today we’ll be talking about IT Ops tax. Or in other words, the hidden costs of running suboptimal IT operations. Most organizations look at their NOC and their command center as their primary cost, and service downtime as their main and often only KPI. But the reality is that that’s just the tip of the iceberg, isn’t it? There are often many other hidden costs that can grow to staggering amounts.

Jason: It is just the tip. And I think it’s really underappreciated across most organizations. Because if you think about IT operations, usually it’s a small operation center (or command center) that is running all the monitoring and the incident management workflows. But let’s go all the way out to the service level and consider that there are online services that are being consumed by customers or employees 24/7. And let’s say there’s an issue with one of those. And yes, your Ops team is there to try and detect it, and they use various monitoring tools to do that. But let’s say they don’t succeed in detecting it. That’s a critical thing. Maybe it happened because the monitoring isn’t there – there is not enough coverage or quality. What’s going to happen is that your service will have an issue, and it will come in via your customer service group. And they won’t be able to triangulate the different customer calls, and these calls will go on for some time, and be handled on an individual level. That means one ticket for each call.

Because your service will experience periodic issues, your CS team will slowly grow their headcount and resources over time to accommodate the growing volume of tickets that they’re taking in.

They will actually build entire processes around doing that well. And then if you go one step upstream from that, your customer support will start getting user reports saying it looks like there’s a problem with this service. And they’ll also dive into it, executing whatever run books they have. This will take up time, and they will similarly scale their headcount, their resources, and build out processes to try to manage it from that perspective. And this continues further and further upstream.

Yoram: So what you’re saying is that we have some sort of suboptimal service, or something isn’t working as well as it should be, but because we don’t have the proper IT Ops in place, because we either have a lot of noise or are not monitoring everything properly, we don’t know that we’re not operating optimally. And then we’re building support and maintenance around something that is not working one hundred percent. We’re actually growing our teams to support something that’s a bit broken.

Jason: Absolutely. And you’re going to grow your teams. You’re going to add additional resources to accommodate whatever load you have.

Yoram: And why is this a hidden cost? Why aren’t organizations aware of this fact? Aren’t they measuring these things?

Jason: It’s very difficult to measure. Why do you need a team of a certain size? Why do you need to build out your application development team to seven resources instead of five, to manage that microservice?

I remember a specific value-added service that we ran on one of the major games at Blizzard and it would generate 12,000 tickets a month for our CS team, and around a thousand incident tickets a month for our IT operations team. And when you looked at that value-added service and went all the way upstream to when it was initially built out, you could see it was created based on a business necessity. It was a little bit of a rushed job. The engineers did the best they could with what they had at the time. But things like good monitoring, like an ability to control the inputs and outputs to that service, failovers, redundancy, availability – all those things were shortchanged because new feature development, or new application development, is always the priority for the development team.

Yoram: So basically, you’re saying: This works, it doesn’t work well, but we’re used to it. Let’s keep it that way, not touch it. And because we’re wary of changes in processes and people, let’s just support it and try to do other stuff in the meantime. But we’re not aware of the actual cost of doing that, because the operations cost trickles down to CS, it trickles down to DevOps teams. And you’re paying this operational cost without knowing it.

Jason: Yes, because the customer support organization doesn’t usually report to the CIO, neither does the application development team in a lot of organizations, and so nobody ever connects those dots. And that was something that I saw us pay the price for.

We had a huge community. Our game teams had entire live operations production groups of eight to ten engineers, simply there for incident management. And it just trickled up through the organization. So, everyone’s team grew just a little bit bigger. Everyone’s team needed a few more software licenses. Everyone’s team ran a little bit slower in terms of velocity.

Yoram: And can you evaluate what cost that was in general numbers? I know it’s a difficult question, but we’re talking about the hidden costs…

Jason: Yeah. You know, half of the company at one point at Blizzard was CS: 2500 people. And over about a five-year period, they did a whole bunch of self-service improvements. They made a whole bunch of monitoring improvements. We did a whole bunch of incident management and operations improvements. We reduced that to 900 people.

If you think about that, that’s not tens of millions of dollars. That’s getting up towards 100 million dollars, because people are expensive, and they were distributed globally.

You need larger offices to support that amount of headcount. And that type of inefficiency is something that you arrive at over a long period of time, not something that you hire overnight and then can get rid of overnight.

Yoram: So, it’s a lot of individual things that accumulate, and altogether they’re causing this huge tax. You called it “death by a thousand cuts”. That was a nice way of presenting it.

Jason: It really is. Yeah.

Yoram: What is the right thing to do, to start raising awareness of this tax? And then what is the right thing to do, to start dealing with this tax?

Jason: I’m a data guy and so I always go back to the data. You need to tag, and label, and identify, and establish a good data standard across your organization, so that your CS organization, your IT operations organization, your IT engineering organization, your application development organization – are all identifying live operations work in the same way, using the same taxonomy. And you aggregate all of that in a single system (like Tableau, PowerBI, or any tool) over time, and watch the trend, watch how much you’re spending on live operations activity. Then you relate it to the signal to noise ratio that your IT operations has, and all the spend you have around monitoring tools, monitoring sources, incident management tools, incident workflows, automation efforts. And you watch the effect on that live operations burden, as you introduce process improvements, as you introduce self-service features for customers, as you introduce better change handling procedures, better configuration management. All those things will lower that tax. And you can see the result.
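As an editorial aside, the shared-taxonomy idea Jason describes can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the record fields (`team`, `month`, `category`, `hours`), the `live_ops` tag, and the sample numbers are all hypothetical and do not come from the interview or from any specific tool.

```python
from collections import defaultdict

# Hypothetical work records exported from each team's ticketing tool.
# The field names, the "live_ops" tag, and all numbers are illustrative.
RECORDS = [
    {"team": "CS", "month": "2021-01", "category": "live_ops", "hours": 400},
    {"team": "IT Ops", "month": "2021-01", "category": "live_ops", "hours": 120},
    {"team": "AppDev", "month": "2021-01", "category": "feature", "hours": 300},
    {"team": "CS", "month": "2021-02", "category": "live_ops", "hours": 350},
    {"team": "IT Ops", "month": "2021-02", "category": "live_ops", "hours": 100},
    {"team": "AppDev", "month": "2021-02", "category": "live_ops", "hours": 40},
]


def live_ops_hours_by_month(records):
    """Sum hours tagged as live operations work, across all teams, per month."""
    totals = defaultdict(float)
    for record in records:
        if record["category"] == "live_ops":
            totals[record["month"]] += record["hours"]
    # Sort by month so the result reads as a trend over time.
    return dict(sorted(totals.items()))


def month_over_month_change(totals):
    """Return the change in live-ops hours from each month to the next."""
    months = list(totals)
    return {cur: totals[cur] - totals[prev] for prev, cur in zip(months, months[1:])}


if __name__ == "__main__":
    totals = live_ops_hours_by_month(RECORDS)
    print(totals)
    print(month_over_month_change(totals))
```

The point of the sketch is that the aggregation only works because every team uses the same `category` value for live operations work. In practice the totals would feed a reporting tool rather than a script.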

Yoram: It’s standardizing the data so you can compare things, and then using enrichment and putting everything together in one place, so you can make ties between things that are happening and the events that caused them. That way you truly understand how well you operate, and what the implications are?

Jason: Yes, of course. You measure what you care about and it’s very important to build the big picture and watch the trends over time, identifying the relevant changes that you’re making in your organization, your processes, and your tooling – and the effect that they have on that.

Because when you launch a new service or a new feature, it may create some additional customer interest and that generates revenue. That’s the focus of service development. But what does it cost to maintain vs. what does it bring in, in terms of revenue? Because that’s really your P&L, that’s really your cost-benefit analysis.

Yoram: Maybe that would be a good place to talk a little bit about BigPanda and how it helps you do the things that you just mentioned.

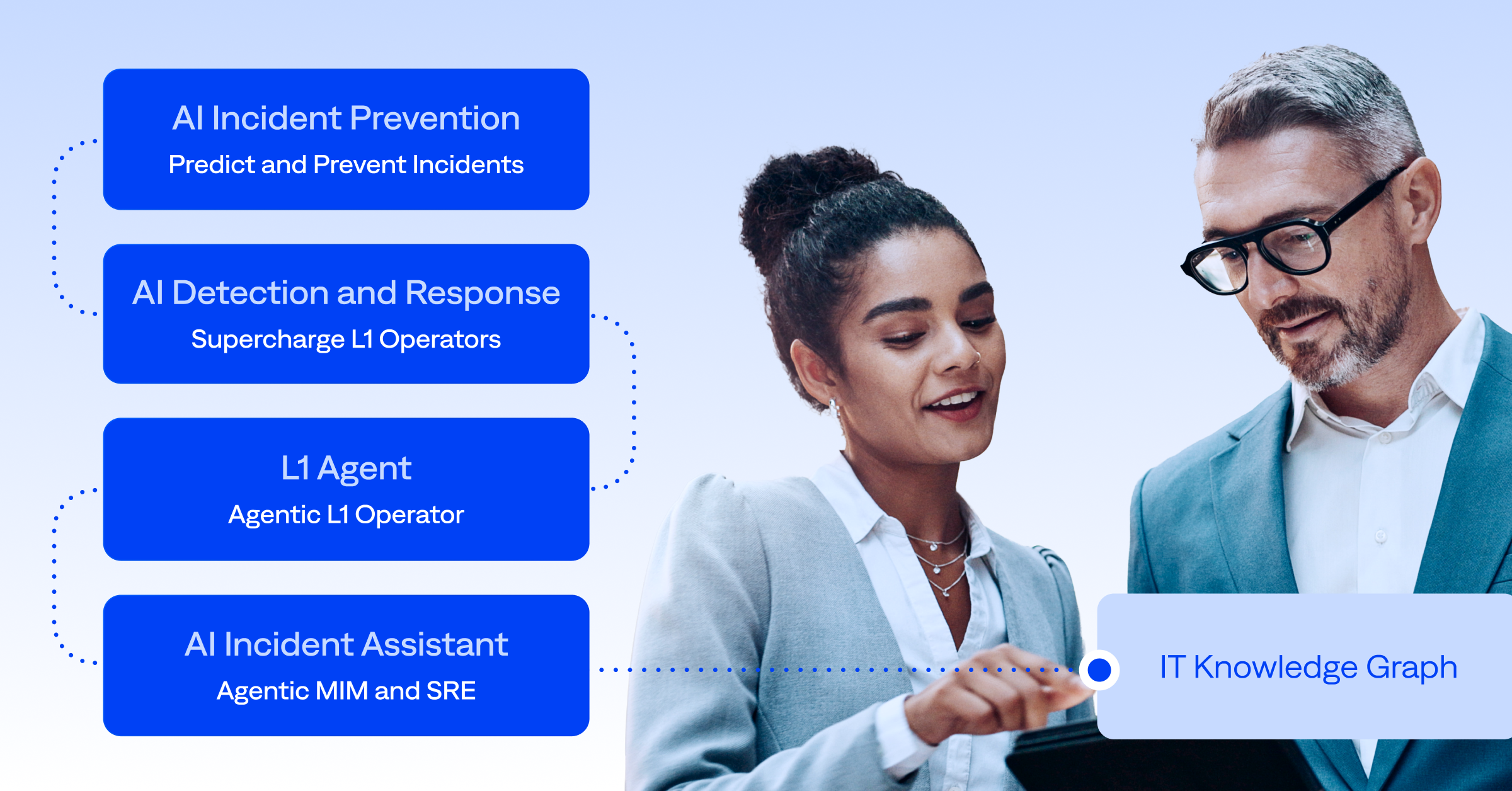

Jason: Of course. The BigPanda Event Correlation and Automation platform is traditionally used by IT operations teams. What it does is bring in all your IT operations data and provide analytics around how that trends over time. The spend around incident management. How did these incidents come in? What was the source of the incident? How long did it take us to detect, to acknowledge, to assign and to remediate? You’re going to see the volume of incidents coming in from all your different sources, and you’re going to watch that over time. You’re going to know which of those were actionable and which of them weren’t. Which of them were maintenance related, which of them came in as user reports and were correlated later on to alerts. You’re going to be able to bring all of that together in one place, analyze it, control it over time, and hopefully, improve it as you make people, process and technology changes across your teams.
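As an editorial aside, the lifecycle questions Jason lists (time to detect, acknowledge, assign and remediate, plus the share of actionable incidents) can be computed from incident timestamps. The sketch below is generic Python, not BigPanda's API or data model: the field names, sources, and sample incidents are all hypothetical.

```python
from datetime import datetime
from statistics import mean

# Hypothetical incident records. Field names and values are illustrative
# assumptions and do not reflect any real product's schema.
INCIDENTS = [
    {"source": "monitoring", "actionable": True,
     "started": "2021-03-01T10:00", "detected": "2021-03-01T10:05",
     "acknowledged": "2021-03-01T10:10", "assigned": "2021-03-01T10:20",
     "remediated": "2021-03-01T11:00"},
    {"source": "user_report", "actionable": True,
     "started": "2021-03-02T14:00", "detected": "2021-03-02T14:45",
     "acknowledged": "2021-03-02T14:50", "assigned": "2021-03-02T15:00",
     "remediated": "2021-03-02T16:00"},
    {"source": "monitoring", "actionable": False,
     "started": "2021-03-03T09:00", "detected": "2021-03-03T09:10",
     "acknowledged": "2021-03-03T09:15", "assigned": "2021-03-03T09:15",
     "remediated": "2021-03-03T09:20"},
]


def mean_minutes_between(incidents, start_field, end_field):
    """Average number of minutes between two lifecycle timestamps."""
    durations = []
    for incident in incidents:
        start = datetime.fromisoformat(incident[start_field])
        end = datetime.fromisoformat(incident[end_field])
        durations.append((end - start).total_seconds() / 60)
    return mean(durations)


def actionable_share(incidents):
    """Fraction of incidents that were marked actionable."""
    return sum(1 for i in incidents if i["actionable"]) / len(incidents)


if __name__ == "__main__":
    stages = [("started", "detected"), ("detected", "acknowledged"),
              ("acknowledged", "assigned"), ("assigned", "remediated")]
    for start_field, end_field in stages:
        minutes = mean_minutes_between(INCIDENTS, start_field, end_field)
        print(f"{start_field} -> {end_field}: {minutes:.1f} min")
    print(f"actionable share: {actionable_share(INCIDENTS):.2f}")
```

Tracking these averages month over month, and splitting them by `source`, is one way to see whether process and tooling changes are actually reducing the operational burden.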

Yoram: How much of BigPanda was used to cut down from 2500 to 900 people at Blizzard?

Jason: That’s a great question. I would be hard pressed for a precise answer. CS had great leadership there. They went on an efficiency mission to lean out all their services. The application development, IT engineering, essentially everybody was united in the fact that, hey, it’s costing us too much to run our services. And so, it was an organizational effort. BigPanda did a great job of showing the progress over time and then really eliminating all the noise.

You reclaim your engineering time in application engineering and in IT engineering teams. And your SREs get back the time that they need to increase velocity. It’s incremental. It’s not slashing 50 percent of your budget out of the gate, but it does make a noticeable difference.

That’s where you get the positive feedback coming to your IT operations team as they kind of bask in the glow of “hey, we’re sending you a lot less noise and you’re feeling it. And as a result, you are very focused on the incidents that we do give you”. Which means everything runs faster and everything runs better. So, you get into this really nice, virtuous cycle.

Yoram: I think that’s eye-opening and a great place to end. I want to thank you once again for your time. This has been great.

Jason: Yes, my pleasure and always good talking to you. I think there’s a lot more to dive into there, but hopefully we can get to that in a future session.

Yoram: I hope so, too.

Yoram: And if you want to hear more CTO perspectives or learn about the BigPanda platform, visit us at BigPanda.io. Thank you for watching and see you next time.