Root Cause Changes: Are They the “Elephant in the NOC”?

Date: July 28, 2020

Category: The CTO Perspective

Author: BigPanda

Gartner states that over 85% of IT incidents are caused by changes in software and infrastructure. The experience of most enterprise IT teams corroborates that number. Yet enterprises still find it difficult to deal with changes and correlate them to the IT incidents they may have caused.

In this CTO Perspective we talk to BigPanda’s CTO Elik Eizenberg about Root Cause Changes, changes in IT environments that cause service disruptions and outages, and why they are so difficult to deal with even though everyone knows they exist.

Read the skinny for a brief summary, then either lean back and watch the interview or, if you prefer to keep reading, take a few minutes to go through the transcript. It’s been lightly edited to make it easier to read. Enjoy!

The Skinny

Ask any IT Ops practitioner what the first question they ask is when joining an emergency bridge call, and you’ll get the same answer: “What changed?” Our customers report that changes in their IT environments cause 60% to 90% of the incidents they see.

Change is essential. It’s what allows developers to push code to production, to deliver new features and new capabilities. It allows innovation. Yet for all their importance, enterprises still find it difficult to deal with changes and correlate them to the IT incidents they may have caused.

Why? For several reasons.

First – the identification of the different types of changes that exist:

- Change management

- CI/CD pipeline changes

- Auto-orchestration changes

- Shadow changes – undocumented and often unintentional

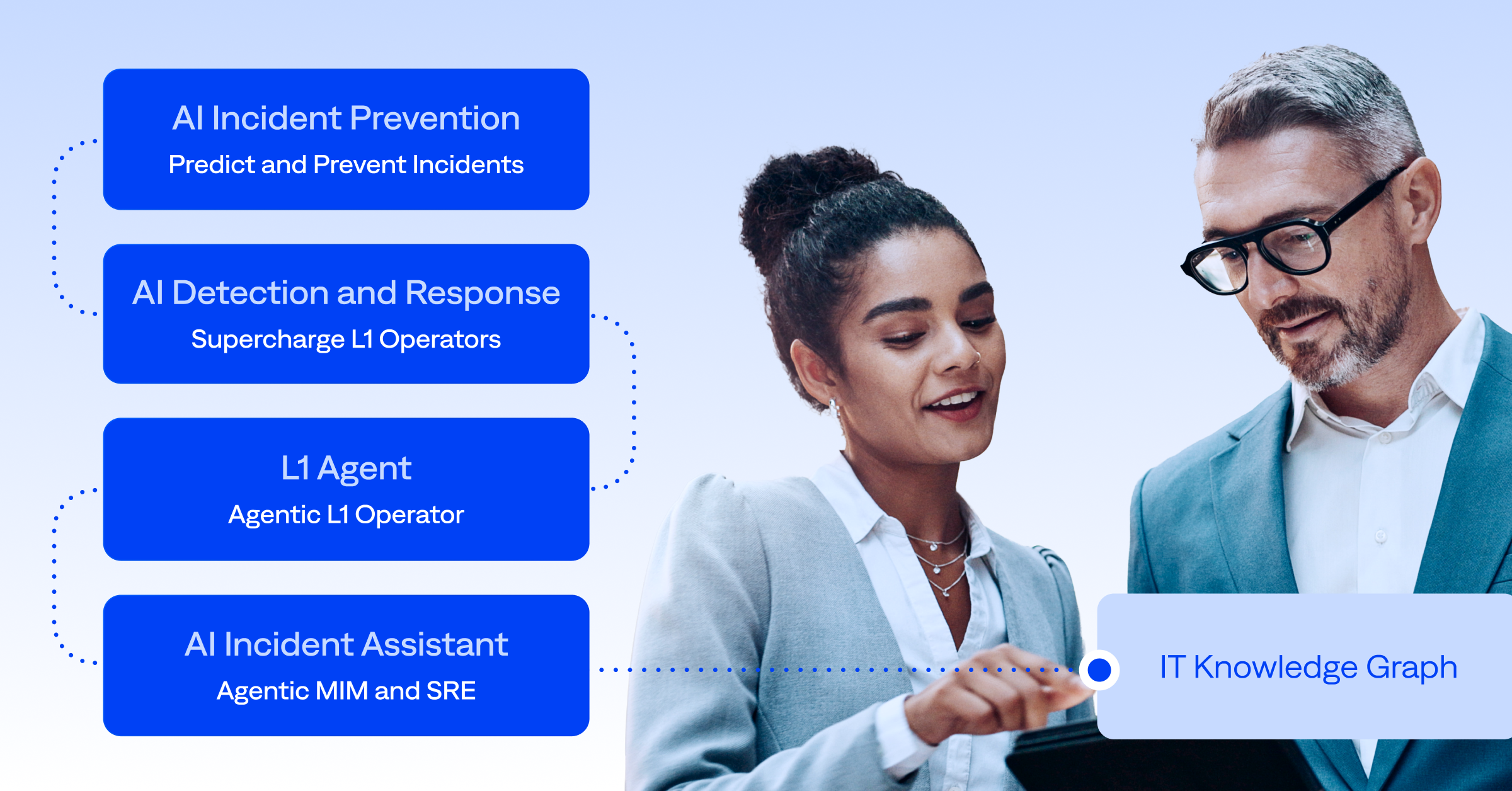

Second – the need for a full paradigm shift around how these changes are handled and how to tie them to incidents in production environments. This requires new tools and new thinking, involving data consolidation and explainable AI/ML.

What exactly are these different change types, and how exactly do we implement a new way of thinking? Watch the interview to find out.

The Interview

The Transcript

Yoram: Hello and welcome to our online conversations about the most current issues in IT operations with the most current people in IT operations. Today we have with us Elik Eizenberg, Chief Technology Officer and co-founder of BigPanda. Hey Elik.

Elik: Hi, Yoram. Really good to be here.

Yoram: Great to have you. In our conversation today, we’re going to talk about a topic that I know is close to your heart and is related to the latest release of BigPanda: Root Cause Changes, meaning changes in software and infrastructure that cause service disruptions and outages. Let’s dive right in: would it be fair to say that changes are one of the biggest challenges that IT operations face today?

Elik: Absolutely. Whenever something breaks in your IT environment, I would say most times it’s because something changed in your environment: someone changed a database setting, someone deployed new code, someone provisioned a new server. Someone changed something, and this is why it broke.

Yoram: So it’s no longer about hardware malfunctions. It’s about software.

Elik: Exactly. 10, 15 years ago, if you experienced an outage in an application, the prime suspects would have been network devices that malfunctioned causing network timeouts or packet losses, or maybe a storage array that was failing and creating corrupt data. But all this is no longer relevant with the cloud migration, with built-in fail-overs for hardware devices. So hardware is no longer the issue.

Actually, root causes are moving up the stack, into the application layer. And when they move into the application layer, the main cause of application breakage is change. Someone has changed something and, as a result, things are not working as they used to.

Yoram: And this is a big issue, isn’t it? Facebook had its longest mass outage back in March due to a server change somewhere. Google had it back in June. This is a really big issue.

Elik: Definitely. Some of our customers have done post-mortems of their recent outages, assigning every outage its root cause. We asked them what percentage of their root causes were changes to their environments, and the answers we got back ranged from 60 to 90 percent of incidents. Gartner actually provides an estimate that 85 percent of incidents can be traced back to changes in the customer’s environment.

Yoram: Eighty-five percent of outages are related to changes! But changes are a crucial part of the way that we work today, aren’t they? Today we’re talking about fast-moving IT, about constantly developing, constantly changing. It’s part of our life. It’s not something that we should try to avoid.

Elik: Change in itself is awesome. Change is what allows us to push code to production, which means that we deliver new features and new capabilities to our end users and customers. It allows us to deliver innovation. It allows us to adapt our IT environment to our business needs.

Changes on their own are amazing. Changes allow us, modern companies, to move fast and to react to our competitors. The problem is that while changes are really effective in driving all of these things we discussed, they also create many production issues, a lot of outages and incidents. And this is where it becomes a real challenge for operations teams and DevOps teams.

Yoram: But we can’t avoid them, can we? We all know that every enterprise has planned changes. Planning changes ahead of time has been common practice for many years now.

Elik: That is true. In the past, we had a kind of “change approval board”: once a week you’d have a maintenance window planned for 5 p.m. on a Monday, and it would have been approved within the change management process. That used to work 15 years ago. But today we have so many other sources of change, changes that occur outside of the change management process, and it’s become really hard to control changes. And honestly, I don’t think I would advise any customer to try to control change. What you need to do is embrace change and find ways to maintain the uptime of your system without stopping change.

Yoram: These other sources of changes that you’re talking about – do you mean automatic scaling, and continuous delivery? Would these be considered changes?

Elik: Definitely. I like to classify four types of changes in most environments.

- The first type is change management, which we have just discussed. This still exists in most enterprises. A big portion of your changes are going to go through the change management process.

- Number two is the CI/CD (continuous integration and continuous delivery) pipeline, where engineers essentially push code to production thousands of times a day.

- Number three is what I call auto-orchestration. For example, you may be deploying a fleet of 50 containers in your Kubernetes cluster, and they get orchestrated automatically, without human intervention: no one is involved in the process of deploying them. Or you might have auto-scaling: for example, there is a high load on a business application behind a load balancer, and the cloud automatically provisions five new instances of that application to handle the load.

- And then number four is what I call shadow changes. These are essentially all the changes that occur in your environment without your knowledge. They don’t go through CI/CD. They don’t go through change management. They’re not part of the orchestration process. They may happen because someone connected to a server and made a change or someone connected to your DNS and made a change. These changes are often the root cause of issues, and you have to find a way to get visibility into them as well.

Yoram: These shadow changes sound scary! I think there’s a separate conversation that we could have about them. So what you’re saying is that change is in essence the way we work. Everybody’s experiencing outages because of changes. Large companies, small companies, we all know about changes. We all know the dangers. And yet for some reason, they are sort of like the elephant in the NOC. Change is still the number one challenge in today’s IT operations. And we’re not really solving it. Why is that?

Elik: I think a big part of modernization has involved reinventing the historical paradigms of doing things. We have reinvented how we handle hardware and network devices. We moved to the cloud, we moved to containers. That’s a big paradigm shift. And we’ve done the same with software. We moved from large monolithic applications to microservices, and we use CI/CD instead of maintenance windows.

A lot has changed in how we orchestrate systems, how we deploy software. But unfortunately, the paradigms that we use to operate those environments are still the same paradigms we used 15, 20 years ago. And to succeed, we need a full paradigm shift around how we handle changes and how we tie changes to incidents in production environments. That requires new tools and new thinking.

Yoram: So we’re not working with the right tools, is that what you are saying? The systems have moved forward to the 21st century, whereas the tools that operate them and deal with changes are stuck somewhere in the past. So how do people tackle changes today?

Elik: The most extreme example I’ve seen is a big insurance company here in the US. Every morning before their workday starts, they print out their change management logs, everything that is a planned change for that day, on a big sheet of paper. And they put this sheet of paper next to every operator in their NOC. When operators have an incident in a production environment, they are told to go through that sheet of paper and try to identify which change, if any, might be the root cause of that incident. Hopefully that helps them identify the change and resolve the incident as quickly as possible. The big challenge is, of course, sifting through the hundreds if not thousands of changes on this sheet of paper. That’s just not doable. Not to mention the fact that this sheet of paper is often out of date, as there may be many changes from the different sources we discussed which are not represented on that one sheet of paper.

Yoram: And you have to do it every day, over and over again. That sounds pretty much undoable. So what is BigPanda’s approach to changes? You’ve said that you need a change in the way of thinking, a change in the paradigm. How does BigPanda approach change?

Elik: It’s two things that we essentially do. The first is consolidation of data. We essentially subscribe to all the different change feeds that you have in the organization. We subscribe to your change management. That’s a great feed of data, but it’s maybe 50 percent of your changes. Then we also subscribe to all of your CI/CD pipelines. If you have multiple ones like Jenkins, Bamboo, TeamCity – we subscribe to all of these. We take in all the CI/CD changes. We also subscribe to your cloud auditing tools like Azure Monitor and CloudTrail from AWS, so we see all the different changes in your cloud environments. We also connect to your orchestration tools like Ansible, Terraform, Chef, Puppet, so we see all the auto-orchestration changes that occur in your environment, and we centralize all of them in one place.
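Editor’s note: to make the consolidation idea concrete, here is a minimal Python sketch of merging two change feeds into a single timeline. It is an illustration only, not BigPanda’s implementation. The `Change` record, the `from_ticket` and `from_build` adapters, and every field name in the sample payloads are assumptions made up for this example; real change-management and CI/CD tools each have their own payload formats.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Change:
    """One normalized change record (hypothetical schema)."""
    source: str    # e.g. "change_management", "ci_cd"
    target: str    # host, service, or resource that was changed
    summary: str   # short human-readable description
    at: datetime   # when the change happened (timezone-aware)


def from_ticket(ticket: dict) -> Change:
    # Assumed ticket fields: "ci", "title", "start" (ISO 8601 with offset).
    return Change("change_management", ticket["ci"], ticket["title"],
                  datetime.fromisoformat(ticket["start"]))


def from_build(build: dict) -> Change:
    # Assumed build fields: "service", "version", "finished_epoch" (seconds).
    when = datetime.fromtimestamp(build["finished_epoch"], tz=timezone.utc)
    return Change("ci_cd", build["service"], f"deploy {build['version']}", when)


def consolidate(*feeds):
    """Merge already-normalized feeds into one timeline, newest first."""
    merged = [change for feed in feeds for change in feed]
    return sorted(merged, key=lambda change: change.at, reverse=True)


# Made-up sample data, for illustration only.
tickets = [{"ci": "db-01", "title": "Raise max_connections",
            "start": "2020-07-27T17:00:00+00:00"}]
builds = [{"service": "checkout", "version": "1.4.2",
           "finished_epoch": 1595872800}]

timeline = consolidate(map(from_ticket, tickets), map(from_build, builds))
for change in timeline:
    print(change.at.isoformat(), change.source, change.target, change.summary)
```

The point of the sketch is the shape of the approach: one small adapter per feed, one shared record type, and a single sorted timeline that an operator or a downstream algorithm can query.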

Yoram: So you’re collecting all the information from all the different change tools and presenting it on one screen where somebody can actually look at the changes and see how they are related to an incident or an alert.

Elik: Exactly.

That’s the first part: centralization of data in one place, which no one really does today, and that creates a big gap in visibility. But the second thing you realize once you centralize the data is that you actually have thousands, if not tens of thousands or even hundreds of thousands of changes every day. So another big part of the challenge is how to look at all the incidents you have every day, and match them with the appropriate changes that caused them.

That on its own is a big problem. This is where we introduced AI and Machine Learning (ML) technology to automatically identify which changes could be the root causes of incidents. And we automatically surface these suspicious changes to the operators and to the DevOps teams, saying here are the changes that have likely caused this incident.

Yoram: So first of all, you’re collecting all the changes from all the tools, which nobody else is doing today. And then you use AI and ML to surface which of them are the root cause changes. What would you say to people who say, well, we’ve heard about AI and ML and we don’t trust them that much?

Elik: Good point. I think traditional AI systems will essentially tell you “We think this is the root cause. We have no idea why, or we know why but it’s represented in this huge matrix of numbers in a neural network or decision tree. And there is no way for you, Mr Operator, Mr Director of IT, Mr Application Developer – to understand why we arrived at this conclusion”. So it’s very hard for you to trust the system and drive your actions based on that system’s decision.

What we do is employ a system called Open Box Machine Learning.

When you see a change that was marked as a suspicious change in your environment, we actually explain to you why we think it’s the root cause. The system articulates the reasoning behind its recommendation. And that allows you, the operator, to consume the decision and drive real action: you get real insight on top of the decision itself.

This is very unique to our technology, and it drives a lot of trust and transparency, which are required in my opinion to adopt a modern AI tool.
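Editor’s note: the sketch below illustrates, in Python, what “a score plus the reasoning behind it” can look like. It is not BigPanda’s Open Box Machine Learning, whose internals are not described in this interview. It is a deliberately simple rule-based stand-in, and the `Change` and `Incident` records, the two rules, and the two-hour window are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Change:
    target: str
    summary: str
    at: datetime


@dataclass(frozen=True)
class Incident:
    target: str
    started: datetime


def score(change, incident, window=timedelta(hours=2)):
    """Return (points, reasons) for one change. Hypothetical rules only."""
    points, reasons = 0, []
    lead = incident.started - change.at
    # Rule 1 (assumed): the change happened shortly before the incident.
    if timedelta(0) <= lead <= window:
        points += 1
        minutes = int(lead.total_seconds() // 60)
        reasons.append(f"changed {minutes} min before the incident started")
    # Rule 2 (assumed): the change touched the same host or service.
    if change.target == incident.target:
        points += 1
        reasons.append(f"touches the same target ({change.target})")
    return points, reasons


def suspects(changes, incident):
    """Rank changes by points, dropping those with no supporting reason."""
    scored = [(score(change, incident), change) for change in changes]
    ranked = sorted(scored, key=lambda item: item[0][0], reverse=True)
    return [(change, pts, why) for (pts, why), change in ranked if pts > 0]


# Made-up sample data, for illustration only.
utc = timezone.utc
incident = Incident("checkout", datetime(2020, 7, 27, 18, 0, tzinfo=utc))
changes = [
    Change("db-01", "Raise max_connections", datetime(2020, 7, 27, 10, 0, tzinfo=utc)),
    Change("db-01", "Rotate credentials", datetime(2020, 7, 27, 17, 0, tzinfo=utc)),
    Change("checkout", "deploy 1.4.2", datetime(2020, 7, 27, 17, 45, tzinfo=utc)),
]

ranked = suspects(changes, incident)
for change, pts, why in ranked:
    print(pts, change.summary, "because it", " and ".join(why))
```

Whatever model produces the ranking, the design choice being illustrated is the same: every suspect change is returned together with human-readable reasons, so an operator can check the logic instead of taking the score on faith.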

Yoram: So what you offer the engineers is, first, collecting everything and putting it on one screen; second, surfacing the probable root cause; and third, explaining why you chose it, providing transparency into the decision so that they trust the system.

Elik: That’s a perfect way to put it.

Yoram: That sounds pretty awesome. I think the natural next question would be: this has been launched, does it work?

Elik: Very much so. It was launched a couple of weeks ago. We’ve tested it for almost a year now with many beta testers from some of the biggest enterprises in the world. They report not only that the technology works, but also that it actually drives real business value. They actually see a reduction in time to resolution, an improvement in their uptime. They see their SLAs improving. Their SLOs improving. So it actually works in some really complex real-world environments. And honestly, we invite everyone to do a proof of concept with BigPanda. You can actually test this on your real data and see that the proposed root cause changes are actually real root cause changes that drive real value to your organization.

Yoram: That’s pretty exciting. I think you’ve piqued a lot of people’s interest right now, so maybe this would be a good place to stop and leave a call to action at the end. Some sort of suspense. I want to thank you so much for your time and for being here.

Elik: Thank you, Yoram. I really enjoyed this.

Yoram: I enjoyed this as well. And for all of you who are still watching, thank you for bearing with us. If you want to learn more about root cause changes or BigPanda in general, please watch our other videos or go to BigPanda.io where there’s a lot of great content about the system.

Until next time, see you later.