Agentic ITOps is here. Here’s what early movers are doing.

We recently brought together IT operations leaders from across financial services, healthcare, airlines, media, and other industries for BigPanda 26, our annual customer event. The theme that emerged above all others during the event’s conversations is that our industry is no longer debating whether AI belongs in ITOps. The debate now is about how quickly it can be implemented, how to measure it, and who’s accountable when it acts.

Here are the key takeaways from BigPanda 26.

Your CMDB doesn’t need to be perfect to get started

For years, the assumption was that automation required perfect data. Clean the Configuration Management Database (CMDB), normalize every data source, and get the foundation right before you do anything else. That multi-year data preparation project became the reason automation stalled, and the $250 billion problem of human-driven IT operations never got solved.

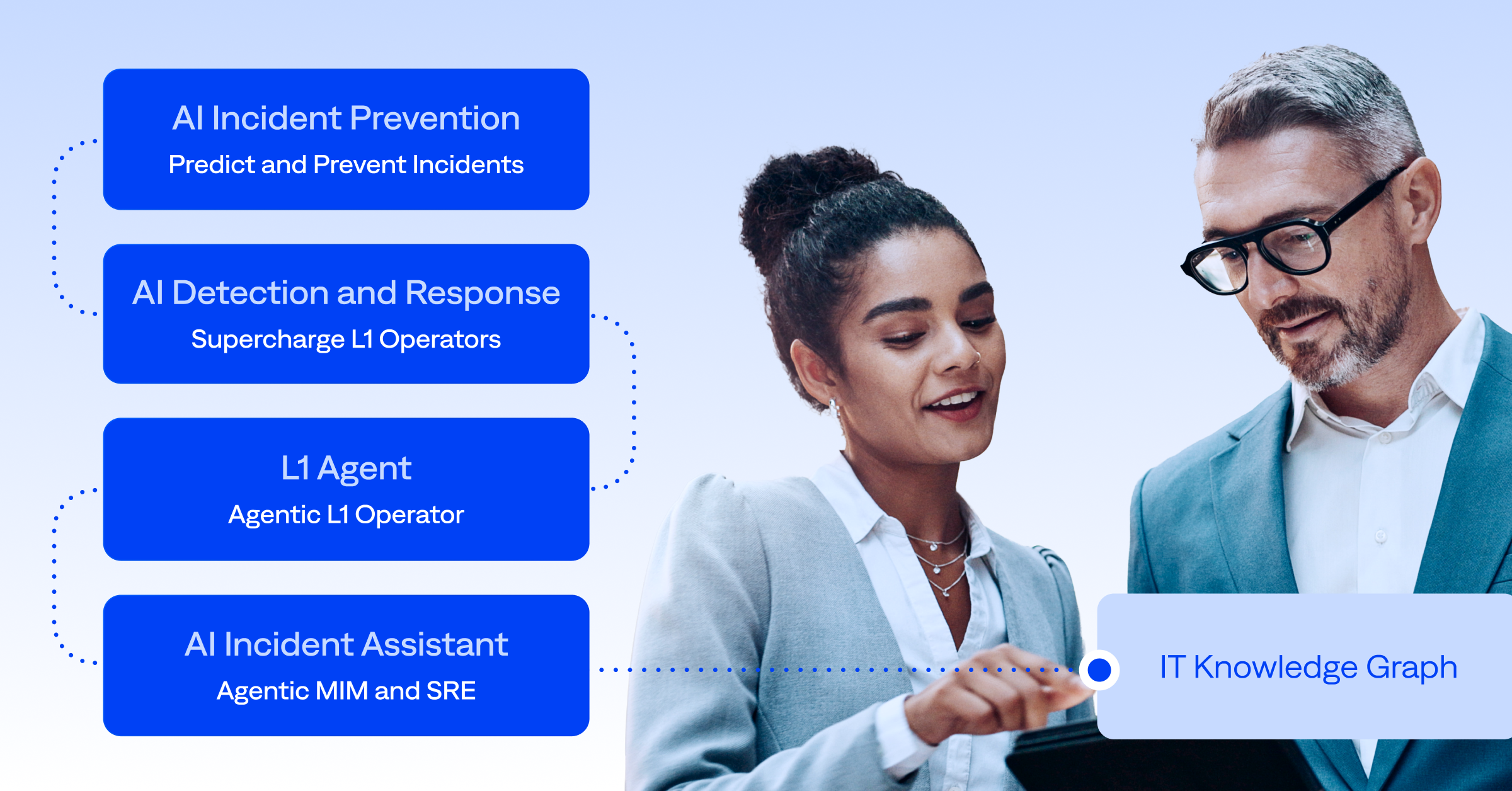

The leaders we met with at BigPanda 26 recognized this ceiling immediately. One financial services executive said plainly that CMDB quality is no longer his organization’s only concern. Instead, his priority is building an IT Knowledge Graph, which harnesses knowledge and context from across the business and augments the data stored in its CMDB.

The collective mindset of those already using AI agents is that the best path is to start using the technology immediately. Don’t wait. Our customers across financial services, insurance, and hospitality shared similar experiences. Value showed up faster than expected, and the data improved as a byproduct of using the platform, not as a prerequisite.

That’s the shift agentic AI makes possible. Instead of treating data quality as the gatekeeper, it treats it as an output. AI can observe patterns, infer relationships, and fill in gaps through daily operations. Every incident it touches strengthens the knowledge layer. The CMDB becomes one input among many, but no longer the thing everything else depends on.

The enterprises moving fastest aren’t the ones with the cleanest data. They’re the ones who stopped waiting for it.

Tribal knowledge remains hidden across the enterprise

What makes agentic AI fundamentally different isn’t just speed or scale. It’s access to context that automation never had before.

The knowledge required to resolve most incidents already exists inside the enterprise. Part of it is locked away in incident records, change logs, post-mortems, and architecture diagrams, but most of it lives in people’s heads: the engineer who remembers a failure pattern from 18 months ago, the system dependency that never made it into the CMDB, the escalation preferences everyone knows but nobody documented. Rules-based automation couldn’t touch any of it, but agentic AI can.

Leaders across industries are concerned that critical operational knowledge is locked inside engineers who are retiring, disengaged, or too busy to document what they know. One leader we spoke to made the stakes concrete: when the AI can’t resolve an incident, it signals that the underlying knowledge is outdated. That’s not a failure state; it’s a diagnostic.

Agentic AI turns this liability into an asset. It observes how engineers respond to incidents, infers undocumented relationships, and surfaces recommended updates for human review. This allows AI agents to build a knowledge layer through daily operations and get smarter because of real-world messiness, not despite it.

The desire to automate is strong, but trust isn’t there yet

With a rich knowledge layer in place, the conversation shifts from capturing institutional knowledge to acting on it. That’s where L1 automation becomes a reality, but the leaders furthest along the automation adoption curve are clear that trust is built incrementally, not assumed.

The most commonly agreed-upon approach at BigPanda 26 is to start with the agent as an observer and recommender before it becomes an actor. One airline’s team wants to surface three different resolution options to the on-call engineer before anyone gets on a bridge call. The human still makes the decisions, only faster, with better context, and without pulling a room full of people into a 2 a.m. war call.

As one manufacturing leader described it, “I have my hand on the wheel, but I’m testing the trust and loosening my grip.” The lessons here are to start with repeat incidents where outcomes are predictable, keep novel P1s in human hands, and let the agent’s scope expand as its track record builds.
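To make that progression concrete, here is a minimal sketch of how such a policy could be expressed. It is purely illustrative and not how the BigPanda L1 Agent is implemented: the `Incident` and `TrackRecord` types, the `decide_mode` function, and the `min_seen` and `min_accept_rate` thresholds are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    HUMAN_ONLY = "human_only"  # engineers own the incident end to end
    RECOMMEND = "recommend"    # agent proposes options, a human decides
    ACT = "act"                # agent may remediate on its own


@dataclass
class Incident:
    signature: str  # hypothetical fingerprint that groups repeat incidents
    priority: str   # for example "P1", "P2", "P3"


@dataclass
class TrackRecord:
    seen: int = 0      # times this signature has occurred before
    accepted: int = 0  # times engineers accepted the agent's recommendation


def decide_mode(incident, history, min_seen=5, min_accept_rate=0.9):
    """Pick how much autonomy the agent gets for one incident.

    The thresholds are assumptions for illustration only.
    """
    record = history.get(incident.signature, TrackRecord())

    # Novel P1s stay in human hands.
    if incident.priority == "P1" and record.seen == 0:
        return Mode.HUMAN_ONLY

    # Not enough history yet: observe and recommend.
    if record.seen < min_seen:
        return Mode.RECOMMEND

    # Repeat, lower-priority incidents with a strong track record
    # are where the agent's scope expands first.
    accept_rate = record.accepted / record.seen
    if incident.priority != "P1" and accept_rate >= min_accept_rate:
        return Mode.ACT

    return Mode.RECOMMEND


history = {
    "disk-full": TrackRecord(seen=10, accepted=10),
    "db-failover": TrackRecord(seen=2, accepted=2),
}
print(decide_mode(Incident("disk-full", "P3"), history))
print(decide_mode(Incident("db-failover", "P2"), history))
print(decide_mode(Incident("never-seen", "P1"), history))
```

In this sketch, loosening the grip amounts to lowering the thresholds or widening the set of priorities allowed to reach `Mode.ACT`. Each of those is an explicit, reviewable change rather than a leap of faith.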

Starting narrow lays the foundation for building institutional confidence that later unlocks broader autonomy. The framing that resonated most is treating the BigPanda L1 Agent as a junior engineer. It handles high-volume, repeatable work and escalates cleanly when it reaches the edge of its knowledge. That’s not a limitation. That’s exactly what you’d want from someone in their first week, and exactly how trust gets built.

Agentic ITOps is driving the shift from reactive to proactive operations

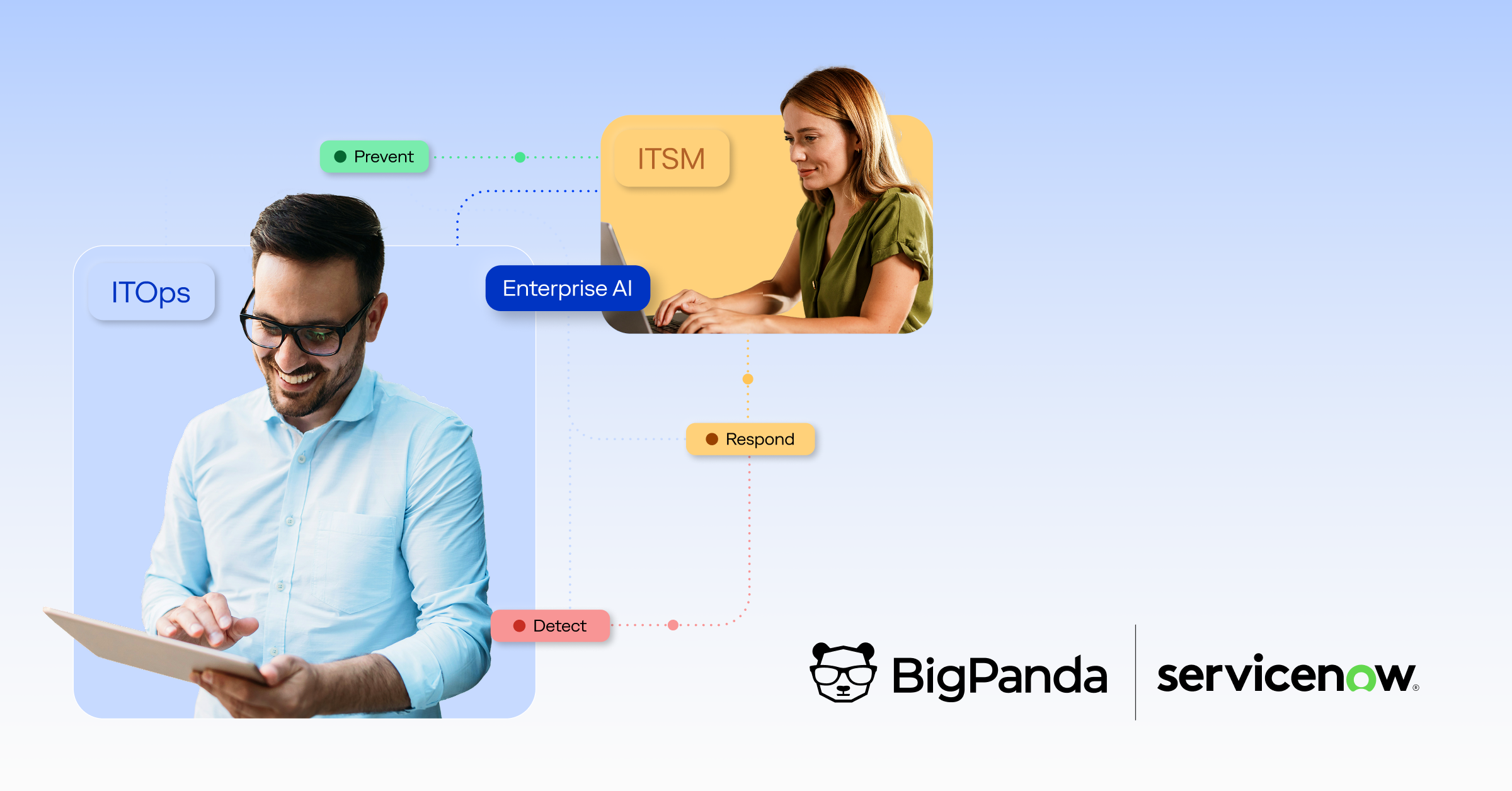

Together, these capabilities unlock proactive issue detection, a goal enterprise IT leaders have pursued for many years. Now it’s a reality. AI can continuously scan the environment and surface anomalies before they become incidents. Leaders described getting to this state as an urgent priority.

The teams that are furthest along share a common trait: they’ve stopped thinking about AI as a feature inside an existing workflow. Instead, they’re building workflows around the AI. The L1 Agent isn’t a tool that fits into the old NOC model; it’s the foundation of a new one.

That shift won’t happen overnight. But if BigPanda 26 made one thing clear, it’s that the leaders who will define enterprise ITOps in five years are already making the bets today.

Are you ready to make your bet? Request a demo or reach out to our team to see where you stand. If you’d like to learn more about BigPanda’s agentic IT operations strategy and hear the BigPanda 26 product keynote from our new Chief Product Officer, join our upcoming webinar on May 12.