The road to automation in IT Ops

Date: August 11, 2021

Category: Enabling the Business

Author: BigPanda

The buzz around automation continues to grow, in every industry, sector and vertical – and for good reason. By enhancing efficiency and effectiveness, automation enables even a very lean team to operate at an outsized level. In IT Ops, the impact can be instant and huge, with improvement measured in the tens or even hundreds of percent. This is thanks to the many aspects of operations that automation facilitates – including faster production, creation of new products and delivery of more services – and all in a stable, predictable, scalable way. And today, with AI and Machine Learning in the mix (aka AIOps), the possibilities and potential for automation are almost limitless.

So, how do you ensure your journey to automated IT Ops is streamlined and effective?

We recently sat down with Udo Strick, Lead Enterprise Systems Management Engineer at Waste Management Inc., and identified four guiding principles:

- Set good standards

- Reduce complexity

- Define and simplify processes

- Automate wisely

Set good standards – powerful and specific

In general, standards can be thought of as specifications and procedures that have been designed to make sure the materials, products, methods, or services people use every day are reliable. Standardization is particularly relevant to IT automation, which is itself a computerized implementation of a standardized process. Put bluntly, computers are dumb and lack creativity. They do only what they’re told, so we have to know exactly what we want them to do before they can do it. This is why only standardized processes can be automated successfully.

The key to optimizing automation is to create powerful standards that enable you to get the most out of your systems. These standards define how your systems communicate with each other, transfer information, look at and analyze data, etc.

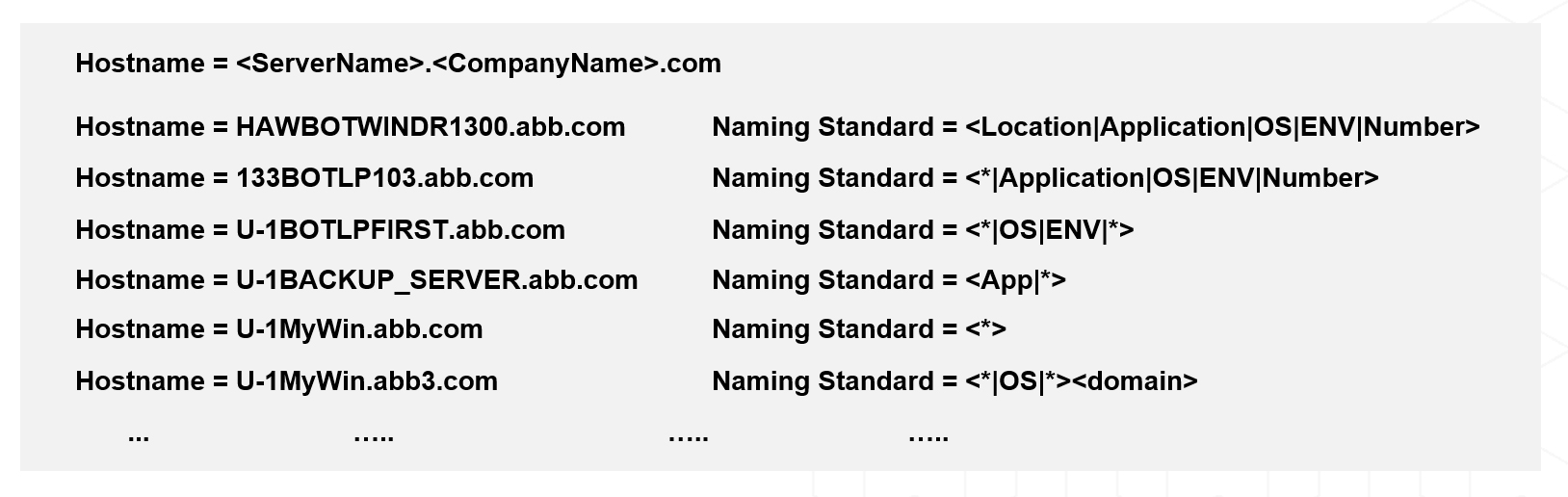

A good example is the standardization of naming conventions, which, in the enterprise IT world, is always something of a challenge. If there is no standard in place regarding what one system is sending across, then the receiving system will just be guessing what to do with the information it is getting. And, if it can’t get the information it needs out of the data stream it receives, then a separate, manually updated lookup table may be required, compromising the efficacy of the automation you wish to set up.

Consider, for example, the host naming standards illustrated in the image above. The more the naming standard is designed with your needs in mind, the easier it is for your systems to analyze the data, and pull out the critical information needed, such as the affected frame, geographical location, application served, etc. If used consistently across the organization, this standard becomes a solid bedrock for the assumptions that tools downstream can make about the data, and for the automation processes you put in place on top – for example, to issue automated alerts, response and remediation actions when a server has an issue.
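To make this concrete, here is a minimal sketch of how a downstream tool might pull fields out of a standardized hostname and attach them to an alert. The naming convention, the field names and the functions `parse_hostname` and `enrich_alert` are all hypothetical, invented for illustration; they are not the standard shown in the image, and your own convention will differ.

```python
import re
from typing import Optional

# Hypothetical convention (an assumption, not the article's actual standard):
#   <site: 3 letters><env: p|d|t>-<app: 2-8 letters>-<role: 2-4 letters><index: 2 digits>
# Example: "houp-billing-web03" = site "hou", production, billing app, web server 3
HOSTNAME_PATTERN = re.compile(
    r"^(?P<site>[a-z]{3})"
    r"(?P<env>[pdt])-"
    r"(?P<app>[a-z]{2,8})-"
    r"(?P<role>[a-z]{2,4})"
    r"(?P<index>\d{2})$"
)

ENVIRONMENTS = {"p": "production", "d": "development", "t": "test"}


def parse_hostname(hostname: str) -> Optional[dict]:
    """Return the fields encoded in a hostname, or None if it breaks the standard."""
    match = HOSTNAME_PATTERN.match(hostname.strip().lower())
    if match is None:
        return None
    fields = match.groupdict()
    fields["env"] = ENVIRONMENTS[fields["env"]]
    fields["index"] = int(fields["index"])
    return fields


def enrich_alert(alert: dict) -> dict:
    """Return a copy of the alert with parsed host fields attached.

    Hosts that do not follow the standard are flagged, because they are the
    ones that would otherwise need a manually updated lookup table.
    """
    fields = parse_hostname(alert.get("host", ""))
    enriched = dict(alert)
    if fields is None:
        enriched["needs_manual_lookup"] = True
    else:
        enriched.update(fields)
        enriched["needs_manual_lookup"] = False
    return enriched
```

The point of the sketch is the contrast: a host that follows the standard yields its location, environment and application with no extra data source, while a non-conforming host can only be flagged for manual handling.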

Reduce complexity – keep only what you need

Complexity is inherent in the dynamic modern-day IT environments in which businesses operate, but that doesn’t mean that we should not try to reduce it wherever possible. A useful rule of thumb is that you should be able to sketch out or explain what your IT environment does and how it functions in around a minute. If you can’t, automation may just exacerbate complexity. So, when you contemplate automation, you should see it as an opportunity to take a step back, recognize areas of unnecessary complexity and identify what can be done to reduce it. To do this properly, you need to make sure you talk to the people who are doing the work on the ground to find out about their actual experience.

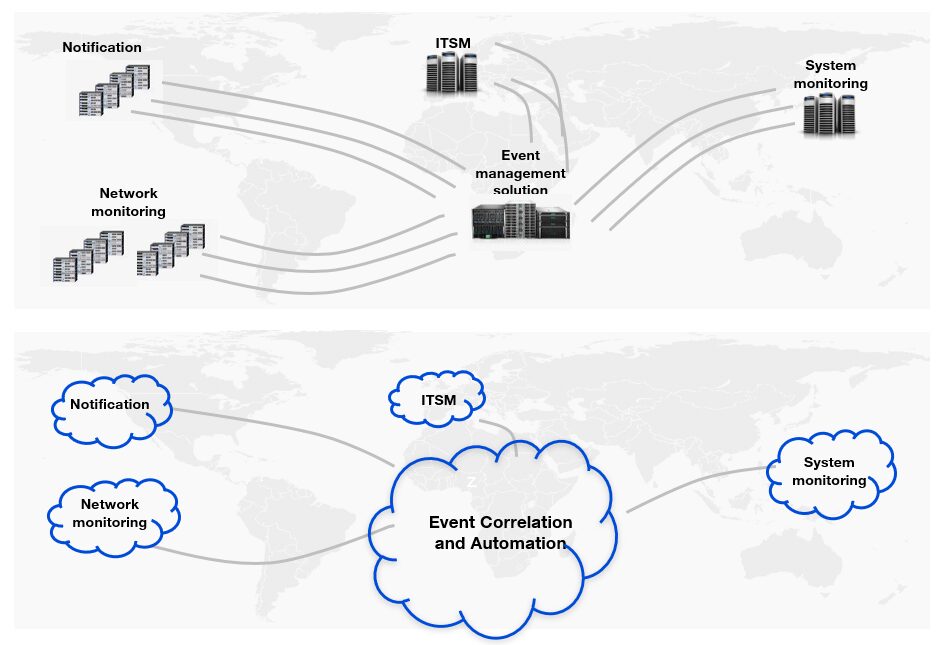

In the diagram above, we see an example of how complexity can be dealt with by moving to a SaaS environment. Operating many on-premises systems that need to be managed and maintained comes at a huge cost – both financially and in terms of efficiency. By moving to a SaaS environment, rather than having to maintain hardware, the operating system, bug fixes and patches, network bandwidth, firewalls, load balancers and the on-prem application software itself, you need to take care of just one thing: the configuration of your SaaS apps!

Tool rationalization is another good example. By reducing the number of tools you work with, you reduce the complexity of your operations.

Now, you can focus your automation efforts on taking care of simplified tasks, saving time and reducing overhead in the long term.

Define and simplify processes – more intelligence, fewer steps

Similar to the previous point, simply automating complicated or bad processes can lead to more complication and overhead. To avoid this unfortunate outcome, you need to begin by identifying the simplest route between your available input and the goal output, free from the baggage of past decisions and tradeoffs. This fresh assessment will direct exactly what your automation will be doing for you in the future. It is here also that all the work you’ve done in the previous two steps – standardizing and reducing complexity – really pays off, since it allows you to simplify your processes even more.

By defining the processes that are important to your IT Ops team or workflows, you can make sure that they are simple, efficient and robust.

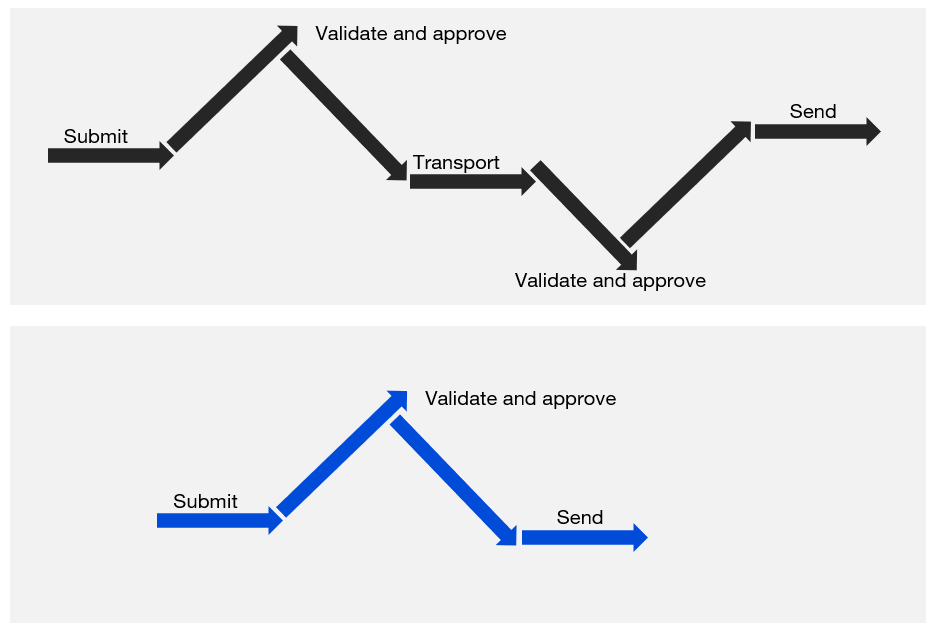

Questions to ask yourself as you do this include: Is this process actually making work easier and more efficient, or is it causing more problems than it solves? Is there a step along the way that is taking too long? What can we do to clear any bottlenecks? Is there any part of our processes that is being unnecessarily duplicated and can be eliminated (as in the diagram above)? What intelligence can we put up front, to minimize the number of follow-up steps required?

This stage is absolutely critical because, as automation scales up our operations, it doesn’t just multiply what we have been doing well with our manual processes; it also multiplies any problems, glitches or defects. So, it’s best to head them off at the pass.

Automate wisely – choose the tools that best fit your needs

Our last guiding principle concerns automation itself. This is where we realize the true value of the previous three principles – in short, it’s where the magic happens. So, take your time to wisely select the tools you use to implement your automation.

As much as we try to keep everything simple, IT environments will always remain noisy, complex and fast moving.

The key to developing resilient automation is to implement technologies that enable us to deal with these inevitabilities as best we can – and it is here that AIOps shines.

Understand what goals you aim to achieve with your automation – and ask what the AIOps platforms you are considering can do for you from that perspective:

- Can they help you with your naming conventions?

- Are they suited to working both on-prem and in the cloud?

- Can they easily integrate with your existing tools?

- Will their communication capabilities adequately support the processes you are aiming to put in place?

- Can they add information to alerts through enrichment?

- Do their AI and ML provide you with adequate flexibility and transparency to implement your tribal knowledge?

These and other questions are important to make sure you are properly equipped as you begin your automation journey. And if you’ve done a good job of instrumenting your automation, you’ll get actionable data from the automated process as it runs, and over time you’ll identify areas for your team to further improve and simplify its flow.
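As a rough illustration of what instrumenting can mean in practice, the sketch below records how long each step of an automated workflow takes, so that the slowest steps stand out as candidates for simplification. The `StepTimer` class and the step names are hypothetical and not part of any particular AIOps platform; a real deployment would more likely send these measurements to its existing monitoring tooling.

```python
import time
from contextlib import contextmanager
from typing import Callable, Dict, Iterator, List, Tuple


class StepTimer:
    """Records how long each named step of an automated workflow takes."""

    def __init__(self, clock: Callable[[], float] = time.perf_counter) -> None:
        self._clock = clock  # injectable so the timer can be tested deterministically
        self.durations: Dict[str, List[float]] = {}

    @contextmanager
    def step(self, name: str) -> Iterator[None]:
        start = self._clock()
        try:
            yield
        finally:
            # Record the duration even if the step raised an exception.
            self.durations.setdefault(name, []).append(self._clock() - start)

    def slowest(self, top: int = 3) -> List[Tuple[str, float]]:
        """Return up to `top` steps by average duration, slowest first."""
        averages = {name: sum(d) / len(d) for name, d in self.durations.items()}
        return sorted(averages.items(), key=lambda item: item[1], reverse=True)[:top]


if __name__ == "__main__":
    timer = StepTimer()
    with timer.step("enrich_alert"):      # hypothetical step names
        time.sleep(0.01)
    with timer.step("open_ticket"):
        time.sleep(0.02)
    for name, seconds in timer.slowest():
        print(f"{name}: {seconds:.3f}s on average")
```

Reviewing a report like this over time is one way to answer the earlier question of whether a step along the way is taking too long.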

Automation is the future of IT Ops, and not just because it makes your IT Ops workflows and teams more efficient. By taking care of mundane, repetitive tasks, it also elevates the human role, freeing up staff to do the more interesting, innovative parts of their job that can really drive your business forward. Following these four guiding principles will help you safely navigate your automation process.

We invite you to watch our webinar with Waste Management to learn more.